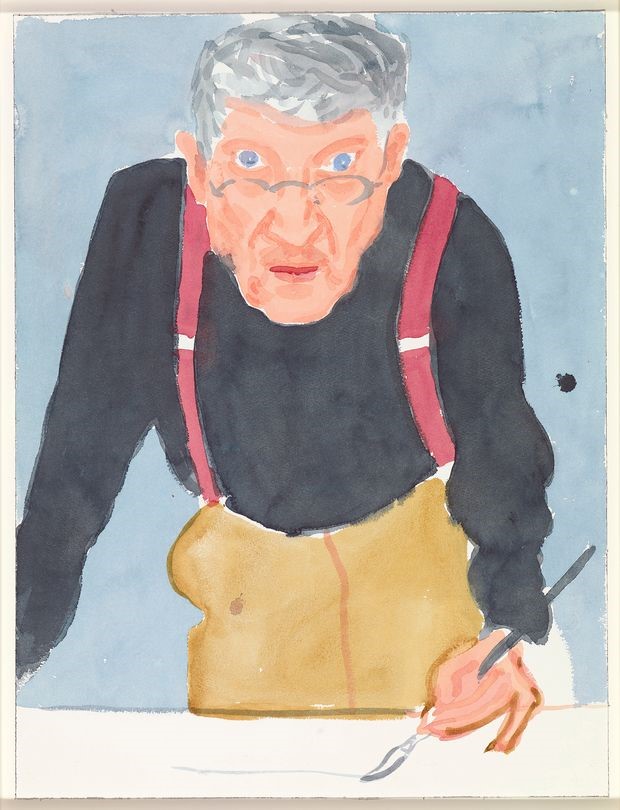

David Hockney Self Portrait with Red Braces. Photo by Richard Schmid

David Hockney is a lesson in aging gracefully: he is 83 years old and exceptionally prolific. Hockney is a SuperAger, and he is not the only one. “Beautiful young people are accidents of nature, but beautiful old people are works of art.” – Eleanor Roosevelt.

Growing old embodies a paradox: our bodies age while we stay mentally agile. Aging changes every bit of us, every organ, including the nervous system, but the brain’s aging process is somehow different. Muscles and joints, hearts and livers seem to wear out (not exactly; aging is a well-controlled cellular process). Remarkably, for most of us the brain doesn’t “wear out” and, in fact, resists the cognitive loss that would prevent us from living gracefully all our lives. There are people in their 80s and older who maintain the cognitive ability of someone decades younger. They are cognitive SuperAgers. It is generally thought that a person’s memory peaks in middle age and then begins to decline. SuperAgers follow a different trajectory, seeming to age much more slowly. What makes these folks mentally resilient?

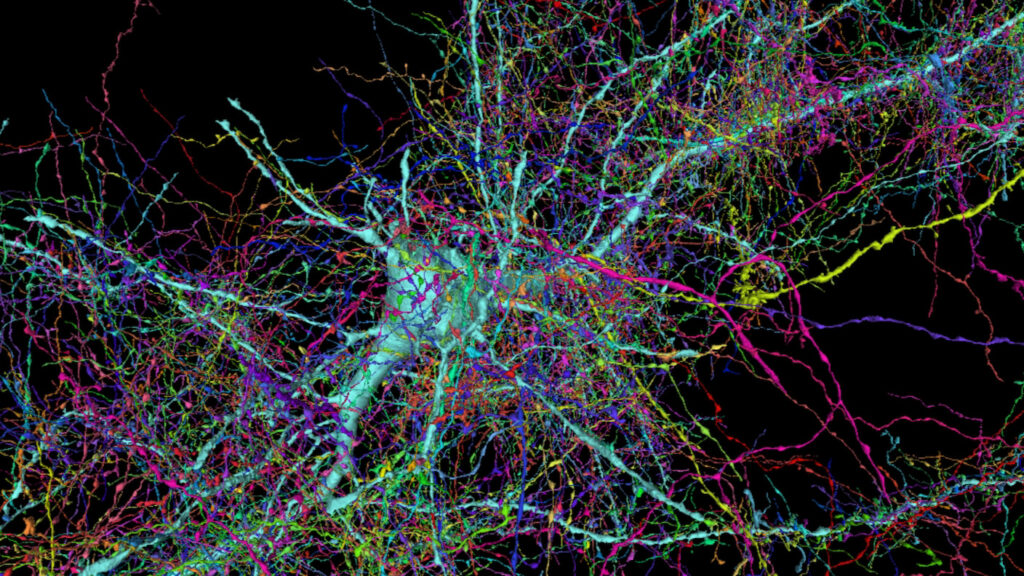

Age brings thousands of changes. Some are obvious, but many others are subtle, cumulative, and consequential. Metabolic processes run less smoothly, memories come less swiftly, and DNA replication and repair become less accurate. Most organs do the same job throughout life: hearts pump, kidneys filter. The brain is notably different in exhibiting immense plasticity and adaptability. Cognitive psychologists like to say that we don’t wake up with the same brain we went to sleep with. As we age, it is true that neurons degenerate, die, and are removed. The volume of the brain steadily shrinks as the number of nerve cells and the connections between them decrease. The great majority of healthy adults simply adapt to these changes and thrive as they age. The BBC produced an excellent video about neuroplasticity, titled “How to rewire your brain,” that provides worthwhile context for our discussion.

During our first 25 years, neural stem cells give rise to billions of neurons, each one making thousands of synaptic connections with other neurons to form networks. Some of these are trimmed as we mature and others grow stronger, but altogether, after adolescence the number of neurons and synapses begins to decline. “Aging is a lifelong biological process,” says Kristin Kennedy at U.T. There’s disagreement about when the brain starts to show signs of aging; some think it seeps in as early as 50, others say earlier. Either way, it is clear that progressive rearrangement and loss is a normal part of becoming a mature, functioning member of the species, and that it involves parts of the brain important to high-level functions like planning, emotion, and creativity. The brain, its chemistry and structure, changes all the time, a plasticity that underlies all the beautiful cellular machinery behind learning and memory, perception, individuality, and perhaps super aging and cognitive resilience, too.

Neurocircuitry at high resolution, a single cortical neuron and surrounding axons. Jeff Lichtman Lab, Harvard University. https://bit.ly/3eKYoHG

Claudia Kawas at UCI leads the longitudinal 90+ Study of more than 1,800 people age 90 and older (the fastest growing segment of the U.S. population). She confirms that atrophy is the strongest correlate of age. The brain of a 90-year-old typically weighs 1,100 to 1,200 grams, which is about 100 grams less than the brain of a 40-year-old. The loss is mostly due to shrinkage of the prefrontal cortex, hippocampus, and cerebral cortex, all of which are important for complex thought processes. Over time there is also a decline in the levels of neurotransmitters, along with changing hormones, deteriorating blood vessels, and impaired circulation of blood glucose. All of this makes it harder to recall words and names, focus on tasks, and process new information. There are positive changes, too: larger vocabularies, greater knowledge of the depth of meaning of words, and accumulated experience. Whether and how older adults apply accumulated knowledge is all part of cognitive resilience, and individuals differ quite a lot in this dimension.

The Long Beach Longitudinal Study tracks the cognitive ability of healthy individuals. The principal finding, an important one, is that physical changes in the brain barely affect our day-to-day lives. For example, one part of the study challenges people to remember groups of words for as long as they live. Elizabeth Zelinski, a gerontologist at USC, summarized the result this way: people forget roughly one tenth of a word per year. In other words, if you learned a series of 17 words in year one, it would take 10 years before you could recall only 16, a decline most people wouldn’t notice or be concerned about. The brain’s plasticity keeps our memories delightfully intact.

Some people do suffer severe cognitive loss as they age. Alzheimer’s disease is a major cause, and dementia due to alpha-synuclein is another. Serious dementia affects 5.6% of the world population. It is not clear why these people are vulnerable in ways that the majority of healthy adults are not, but the prospect of mental loss is frightening and fuels enormous effort to find causes and cures. On the flip side, SuperAgers seem resistant to dementia. Although it’s normal for brainpower to decline, it’s not inevitable, and some people defy the common assumption that cognitive decline is a natural part of aging. There is plenty to learn from both groups. Finding the causes of both mental decline and mental resilience is a sticky problem. Jonathan Hakun at Penn State points to the root of the stickiness with the phrase, “nothing’s really entirely normal.” I call it a uniqueness problem: when it comes to how our brains change over time, everyone is unique. There is no reason to think that any two of us are changed the same way by the same experience. Scientific paradigms fall down when confronted with enormous variation, and science that depends on statistical inference really struggles with it.

What do we know about SuperAgers?

Important insights into the cognitive resilience puzzle come from functional brain imaging. For example, it has been shown that when old adults and young adults are asked to perform the same cognitive task, both groups can complete it, but their brains appear to function completely differently. Old and young apply different brain pathways, in different sequences, and at different speeds. According to Jonathan Hakun, “the functional changes are wild.” It reminds me of the saying, “there are many ways to verb a noun.”

Emily Rogalski and her team at Northwestern track the performance of SuperAgers over time, hoping to discover what they have in common. They find that SuperAgers are a diverse group spanning socioeconomic backgrounds, education levels, and ethnicities. Some have gone through significant trauma, some drink, some exercise regularly, and some need walkers to get around, but they are similar at deeper levels. For one, brain scans show that their frontal cortex, an area involved in executive function, is a lot larger than in normal peers. It shrinks, but at a much slower rate. Remarkably, they also have more of a specific type of neuron, called von Economo neurons, or spindle neurons. These large, rapidly conducting cells make enormous numbers of synaptic connections over long distances and are found mostly in animals known to express empathy. Spindle neurons play a role in social interactions, social intelligence, and awareness. To top that off, SuperAgers tend to have stronger and larger social networks than their peers, with more friends and family connections. It is not known how a rich social life contributes to cognitive resilience, but loneliness and social isolation are early signs of dementia. This builds on research showing links between psychological well-being and lower risk of Alzheimer’s.

Researchers at Northwestern also found what might be a brain signature for cognitive SuperAgers. The cingulate cortex, a region important for integrating information related to memory, attention, cognitive control, and motivation, is significantly larger in SuperAgers, resembling the same region in middle-aged people. Using MRI to measure brain volume over an 18-month period, they found a 2.24% loss of volume in the brains of cognitively normal adults versus a 1.06% loss in the SuperAger group. In short, SuperAgers lose brain mass at a significantly slower rate, and some important regions are especially protected. At Massachusetts General Hospital, researchers are studying younger SuperAgers, people between 60 and 80 who have memory recall similar to that of 18- to 32-year-olds. They’ve identified increased thickness in two neural networks that connect areas important to memory processing. They also found that SuperAgers have a larger hippocampus. The hippocampus plays a key role in memory and recall, and this could explain, in part, their greater memory capacity.

Positron emission tomography (PET) is another tool for studying the brain, in this case by imaging metabolic activity. The brain has an ultra-high metabolic rate that changes dynamically on a fine spatial scale in response to changes in neural activity. Rapid metabolism produces waste products that can damage cells if they accumulate. Normally, waste products are swept away by the glymphatic system, which plays a maintenance role, ensuring efficient elimination of soluble proteins and metabolites. It works primarily during sleep and is largely disengaged during wakefulness. The biological need for sleep rests partly on the need to eliminate neurotoxins accumulated during the day. The blood-brain barrier (BBB) also plays a maintenance role by regulating the kinds of molecules exchanged between the brain and the blood, and by preventing viruses (for example SARS-CoV-2) from reaching the brain. Breakdown of the BBB results in neuroinflammation and oxidative stress, leading to mental decline. It is not known, but possible, that SuperAgers have particularly strong glymphatic and BBB systems, and maybe good sleep habits, too.

Two toxic proteins that accumulate with age get a lot of attention: amyloid-beta and tau. They are associated with the death of neurons and the onset of dementia and are the basis for the amyloid hypothesis, which asserts that the problem in Alzheimer’s disease (AD) is a failure in protein regulation resulting in aggregates of amyloid protein. Healthy cells keep proteins in balance, but a number of stressors accrue with age that can disturb the balance and allow misfolded proteins to accumulate. This is a particular problem for long-lived cell types, like neurons. However, there are some perplexing findings that need to be explained. Claudia Kawas (UC Irvine’s 90+ Study) provides this example: some SuperAgers show the physical pathology characteristic of Alzheimer’s disease. “Everyone thinks there’s this really strong correlation: if you have plaques and tangles, you have dementia, and if you don’t have plaques and tangles, you shouldn’t have dementia,” she says. In fact, both of those things are often not true. She explains, “You can super age by not getting Alzheimer’s pathology, or you can super age by getting it and somehow managing to not fall sick because of it.” This brings up a key question: Are resilient brains those that are able to cope with stressors that should impair function? Or are the resilient ones those that are somehow resistant to them in the first place? In a way, it doesn’t matter how the brain stays healthy, just that it does. It is good to remember that brains have lots of redundant systems, so if one stalls out, others are able to compensate.

How do some people avoid mental decline?

Environment may be a factor in mental resilience, as may social factors and healthy living. There is also evidence that enriching experiences, like advanced education and mind-challenging occupations, offer protection from mental decline. The concept is called cognitive reserve, the idea being that stimulating experiences lead to changes in brain structure and function that protect against dementia. It contends that a person can acquire mental skills that help to fend off assaults from aging or disease that would devastate others. No one knows how, but stimulating activities that build strong networks of connected neurons early in life (classroom learning and problem-solving skills are examples) make it easier to adapt to the changes that come with age. This is only a correlation, but a curious one. It may involve epigenetic changes to the genome such that cognitive skills learned through experience become part of a set of mental tools that are retained and can be applied in new contexts much later. The Longevity Genes Project at Albert Einstein College of Medicine is looking into genetic and epigenetic factors linked to cognitive resilience.

Cognitive reserve is a mystery that might involve neural stem cells. The nervous system harbors resident stem cells that have the ability to self-renew, proliferate, and differentiate into new neurons and support cells well into adulthood. For example, new neurons are added to parts of the hippocampus, a region important to memory processing. But with age, the ability to create new hippocampal neurons steadily declines and with it, memory. In general, age is associated with a significant decrease in neurogenesis. Interestingly, there are suggestions that the decrease is less pronounced in the brains of SuperAgers. New research using genetic engineering shows potential for reactivating neural stem cells in aged animals. It seems straight out of science fiction, but you can imagine what this would mean if it can be applied to people.

Age-dependent changes in neuroglia may also play a role in cognitive reserve. Our brains are optimized for learning, and that involves adding, subtracting, and strengthening synapses. These are glial functions, and they rely on glial stem cells present in the brain. The cool thing is that the health of neuroglia is subject to environmental factors and lifestyle (diet, education, mental and physical activity). Cognitive super aging may depend in part on healthy neuroglia.

Microglia and neuroinflammation

The brain has its own immune system formed by cells called microglia. Their job is to identify and eliminate invaders, like viruses, and to clear away dying neurons. Microglia also interpret chemical signals from the peripheral immune system. When activated, they initiate physiological and behavioral responses to manage infection or injury. With aging, however, microglia become prone to overreaction. The result is increased pro-inflammatory cytokines in the brain and a kind of chain reaction that damages brain tissue. In the age of the pandemic we have come to know this as a cytokine storm, and it is quite important. The major difference in microglial response between young and old individuals is that the response is amplified and prolonged in aged people, causing exaggerated neuroinflammation, sickness behavior, depressive-like emotions, and cognitive loss. It may be that SuperAgers are less susceptible to microglial overreaction. A new diagnostic tool using markers of inflammation is being developed by researchers at Stanford and the Buck Institute to assess one’s biological age vs. chronological age. “Every year, the calendar tells us we’re a year older,” said David Furman, the senior author. “But not all humans age biologically at the same rate. You see this in the clinic: some older people are extremely disease-prone, while others are the picture of health. This reflects in large part differing rates at which people’s immune systems begin to degrade.” The idea is that as we grow older, our bodies experience chronic low-grade inflammation as cells become damaged and emit inflammatory cytokines that activate the immune system. This damages more cells, and so on. People who have a healthy immune system are able to control the situation and limit neurodegeneration to some extent, whereas others will age faster.
Their method, the inflammatory aging clock (iAge), tracks the health of the immune system by measuring blood levels of a biomarker, CXCL9, and comparing it to a large database. The marker is a cytokine that begins to increase quickly after age 60. The research team tested the method using blood samples from 29 SuperAgers aged 99 and older in Bologna, Italy, and comparing their inflammatory ages with those of 50- to 79-year-olds. The SuperAgers had inflammatory ages averaging 40 years younger than their calendar age. One 105-year-old man had an inflammatory age of 25. It is tantalizing to think this is related to cognitive resilience, especially because inflammation is known to cause neurodegeneration and is readily treatable. The iAge tool could help doctors determine who will benefit from intervention, potentially extending the number of years a person lives in good mental health.

I’m fascinated by places where people live long lives and thrive well into their golden years: Blue Zones. Places like this are found around the world: in Sardinia, Italy; Ikaria, Greece; and Okinawa, Japan, for example. The tiny country of Monaco is the second smallest in the world but has the highest average life expectancy, 89.4 years. In the US it is 78.7 years. What can these people tell us about super aging? There is no magic formula, but long-lived populations do share some common habits. Locals tend to eat balanced, mostly plant-based diets, practice daily low-impact activities and move naturally (gardening is an example), focus on family, and carve out plenty of time to enjoy life, with a healthy love of wine and sunny weather and a low-stress lifestyle. The list gives me places to start on my quest for cognitive resilience, especially useful because I live and work in a society known for high stress. But after all of this, I still find myself asking: What sets these people apart? Because it seems that all that is required of us to function in the world is for our brains to change in an orderly way as a result of our experiences.

Consider this: “There is a fountain of youth: it is your mind, your talents, the creativity you bring to your life and the lives of people you love. When you learn to tap this source, you will truly have defeated age.” – Sophia Loren

-neuromavin