Some systems can only be described by very large numbers. Take the universe, for example. How many galaxies are in the known universe? The best estimate comes from the Hubble eXtreme Deep Field study, which suggests somewhere between 100 and 200 billion, although other simulations put the number closer to 500 billion. These are big numbers; in scientific notation they are 1 to 5 × 10^11 (a 1 to 5 followed by 11 zeros). But if we go further and consider the number of stars in all those galaxies, we get to a very healthy, very big number. Assume each galaxy has one hundred billion stars (1×10^11; a conservative guess). Then, taking the low estimate of 100 billion galaxies, the number of stars in the known universe is on the order of 1×10^22, and that is without even considering multiverses. What can compare?
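For anyone who wants to check the arithmetic, here is a minimal sketch of the star estimate in Python. The variable names are made up for illustration, and the inputs are just the rough figures quoted above, not measurements.

```python
# Back-of-the-envelope star count using the rough figures quoted in the text.
# All names here are hypothetical; the inputs are estimates, not measurements.

galaxies_low = 1 * 10**11    # 100 billion galaxies (low estimate)
galaxies_high = 5 * 10**11   # 500 billion galaxies (high estimate)
stars_per_galaxy = 10**11    # assumed average of 100 billion stars per galaxy

# Multiply galaxies by stars per galaxy for each end of the range.
stars_low = galaxies_low * stars_per_galaxy
stars_high = galaxies_high * stars_per_galaxy

print(f"Stars in the known universe: {stars_low:.0e} to {stars_high:.0e}")
```

Under these assumptions the range runs from 1×10^22 to 5×10^22, so the figure in the text is the low end of the estimate.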

The eXtreme Deep Field. (Credit: NASA, http://1.usa.gov/1eyxs46).

ESO (European Southern Observatory) produced a wonderful video about deep space. You will enjoy this: http://youtu.be/GsEQ7bcU0n4

Computer engineers deal with large systems, too. How large? IBM is building a computer based on its SyNAPSE chip. The device uses a network of elements, termed neurosynaptic cores, connected in a fashion loosely based on the long-distance wiring of the monkey brain and with cores clustered into brain-inspired regions (http://ibm.co/1zzFJAC).

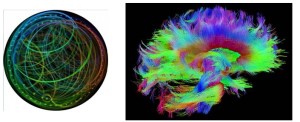

This is how IBM diagrams the concept (left), along with a diffusion spectrum image (DSI) highlighting the connections between points in the brain of a rhesus monkey. Only a small fraction of the connections are shown. To learn how images like these are made and interpreted, see http://bit.ly/1Ly5rgy.

Ray Kurzweil, currently at Google, summarized IBM’s progress to date (http://bit.ly/1Ly5rgy). Teaming up with Lawrence Livermore National Laboratory, IBM achieved a prototype emulating 5.3×10^11 neurons (530 billion) and 1.37×10^14 synapses (about 137 trillion). Below is the Blue Gene/Q Sequoia supercomputer they used, with a rhesus monkey shown to scale in the foreground.

Thinking about supercomputers set me to wondering: how big is the human brain? This is not a simple question because there are so many bases on which to make comparisons. Volume is out because supercomputers are just enormous hulks. One has to ask, what is the most meaningful unit of measure? For systems that deal in information, it might be the number of processing units and the connections between them. It also might have something to do with the fundamental mechanisms used to handle information, be they electrical, optical, chemical or other. There are other meaningful units, I am sure, but let’s take these as a starting point.

All nervous systems have a cellular design, and information is transferred between cells at synapses. So how many synapses are there in the human central nervous system? Good estimates range between 100 trillion and 1,000 trillion; expressed in scientific notation, that is 1×10^14 to 1×10^15. A very large number of connections, on a par with IBM’s finest. Then again, how many neurotransmitters and transmitter receptors are there, and how do they mix and match at individual synapses? This will add a couple more orders of magnitude to the equation. All of this makes it clear that the human brain is a complicated system in the sense that a supercomputer or a universe is complicated, and large on a similar scale.

I would argue, however, that the brain is, in addition and perhaps unlike the others, a complex system, and I do not think that the number of synaptic connections gives a meaningful representation of information processing power. We have to add more dimensions because the brain does not run out of tricks when it runs out of synapses, and the most important trick is probably the ability to change, i.e. plasticity. Synapses can be added and deleted, they can change strength in response to experience, and new connections can be made over short and long distances and old ones broken as well. This is the stuff of learning. New neurons can be added by the activation of neural stem cells, and in at least one part of the brain, the hippocampus (the transit point for memory consolidation and recall), this happens throughout life. That is part of learning, too. All of this plasticity occurs very rapidly, on a time scale of milliseconds to hours; a rate that leaves the cosmos and Moore’s Law in the dust.
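To put the synapse counts side by side, here is a small Python sketch that compares the quoted figures by order of magnitude. The names and the helper function are invented for illustration, and the numbers are the rough estimates from the text.

```python
# Compare the quoted synapse counts by order of magnitude.
# Names are hypothetical; values are the rough estimates quoted in the text.

ibm_synapses = 137 * 10**12      # 1.37 x 10^14, the IBM prototype
human_synapses_low = 10**14      # 100 trillion
human_synapses_high = 10**15     # 1,000 trillion


def order_of_magnitude(n):
    """Return the power of ten of a positive integer (digits minus one)."""
    return len(str(n)) - 1


for label, count in [
    ("IBM prototype", ibm_synapses),
    ("Human brain (low)", human_synapses_low),
    ("Human brain (high)", human_synapses_high),
]:
    print(f"{label}: about 10^{order_of_magnitude(count)} synapses")

# How far the high human estimate sits above the IBM prototype.
ratio = human_synapses_high / ibm_synapses
print(f"High human estimate is about {ratio:.1f} times the IBM figure")
```

By this count the prototype sits at the low end of the human range and within one order of magnitude of the high end, which is all that "on a par" is meant to convey here.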

We have talked of three big things: the universe, a supercomputer and the human brain. All three are complicated because of the enormous number of parts. In addition to being complicated, however, the brain is also complex because it is self-assembling and because it is made of tunable elements capable of changing dynamically in response to events both real and imagined. This is the beauty and the advantage of evolving biological systems.

If you are interested in big numbers, read this mind-spinning discussion by Alasdair Wilkins: http://bit.ly/1hoAKJi. Look at infinityfringe.com as well.

-Neuromavin